Blazingly Fast Load Times For Apps Built with Ext JS and Sencha Touch

For years, web developers have deployed their web applications according to the best practices defined by Yahoo’s Exceptional Performance team. Because traditional applications are built from a large collection of JavaScript source files, CSS stylesheets, and image assets, these best practices call for combining and compressing the assets into single-file downloads. In theory, delivering a single JavaScript file and a single CSS file reduces the number of HTTP requests (as well as the total asset size), which makes transfers more efficient and improves page initialization times.

Although a variety of publicly available tools can accomplish these basic tasks, none offers the convenience, dependency management, and build automation capabilities of Sencha Cmd for applications built with Ext JS and Sencha Touch. By running “sencha app build”, you deploy only the subset of the framework code that you actually need. Combined with your application code, the output is an optimized production build.

Small to medium-size applications typically benefit the most from this build and deployment strategy, but enterprise developers often have to consider additional factors. For example:

- Enterprise desktop applications frequently involve hundreds of input forms and other complex presentation layouts. When a very large enterprise application exceeds 8 MB of JavaScript, CSS, and images, does compressing everything into a single file really make a difference?

- Role-based applications also present interesting engineering problems. Consider a “low level” user who has access to only 10% of the functionality: why should they download the other 90% of the code? Should the enterprise even risk serving them compressed code for features they are not permitted to use?

Clearly, the benefits of this approach to minification plateau once applications become sufficiently large or complex. At that scale, page initialization degrades because simply loading and parsing this much JavaScript (without even executing it) can freeze the browser.

There was no ready-made solution to this problem, so the Sencha Professional Services team recently developed an approach for one of our customers. While it doesn’t solve every problem faced when building large enterprise applications, we wanted to share this solution, which uses Sencha Cmd to split the production build so that JavaScript resources can be loaded on demand.

You can view the project files used in this article on GitHub.

Wait – Isn’t Dynamic JS Loading Slow?

It can be, depending on a few factors. The core idea behind Yahoo’s best practices is that reducing the number of HTTP requests should decrease page load times, because most browsers limit the number of concurrent HTTP connections per domain (typically six). So while, in theory, a single file downloads faster than ten separate files, a single large file will not necessarily download faster than six smaller files fetched in parallel.
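To make that trade-off concrete, here is a deliberately simplified model, an assumption rather than a measurement: every wave of requests costs one round trip of latency, at most six requests run per wave, and all files share a single link’s bandwidth. The function name and the numbers are illustrative only; real browsers add effects such as TCP slow start that this sketch ignores.

```javascript
// Toy model (assumption): one round trip of latency per "wave" of parallel requests,
// at most maxConnections requests per wave, and one shared link for all bytes.
function estimateDownloadMs({ fileCount, totalKB, rttMs, bandwidthKBps, maxConnections = 6 }) {
  const waves = Math.ceil(fileCount / maxConnections); // latency is paid once per wave
  const transferMs = (totalKB * 1000) / bandwidthKBps; // parallel files still share bandwidth
  return waves * rttMs + transferMs;
}

// Illustrative numbers: 600 KB of JavaScript, 100 ms round trip, 500 KB/s link.
const link = { totalKB: 600, rttMs: 100, bandwidthKBps: 500 };

console.log(estimateDownloadMs({ ...link, fileCount: 1 }));  // 1300
console.log(estimateDownloadMs({ ...link, fileCount: 6 }));  // 1300
console.log(estimateDownloadMs({ ...link, fileCount: 12 })); // 1400
```

Under these assumptions, splitting 600 KB into six files costs nothing extra, while splitting it into twelve adds one round trip. The model says nothing about parse time, which is where delivering less JavaScript up front helps most.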

Consider first that Ext JS and Sencha Touch applications built with Sencha Cmd have two environments: “development” and “production”. In “development” mode, a Sencha app loads each class individually by following its dependency chain (declared through the “requires” configuration); in “production” mode, it loads a single “my-app-all.js” file containing only the necessary classes.
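The dependency chains mentioned above can be pictured as a graph walk in which a class is loaded only after everything in its “requires” list. The sketch below is a conceptual illustration of that idea (a depth-first topological sort), not Ext.Loader’s or Sencha Cmd’s actual code; the loadOrder name and the dependency data are hypothetical and simplified.

```javascript
// Conceptual sketch only (not Ext.Loader's code): order classes so that each one
// comes after everything in its "requires" list (depth-first topological sort).
function loadOrder(requiresByClass, entry) {
  const order = [];
  const state = new Map(); // class name -> "visiting" | "done"

  function visit(name) {
    if (state.get(name) === "done") return;
    if (state.get(name) === "visiting") {
      throw new Error(`Circular requires involving ${name}`);
    }
    state.set(name, "visiting");
    for (const dep of requiresByClass.get(name) || []) visit(dep);
    state.set(name, "done");
    order.push(name); // added only after all of its dependencies
  }

  visit(entry);
  return order;
}

// Hypothetical, simplified dependency data.
const requiresByClass = new Map([
  ["ChunkCompile.view.Main", ["Ext.tab.Panel", "Ext.TitleBar"]],
  ["Ext.tab.Panel", ["Ext.Container"]],
  ["Ext.TitleBar", ["Ext.Container"]],
  ["Ext.Container", []],
]);

console.log(loadOrder(requiresByClass, "ChunkCompile.view.Main").join(", "));
// Ext.Container, Ext.tab.Panel, Ext.TitleBar, ChunkCompile.view.Main
```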

The approach we examined for dynamically loading JavaScript in production combines the idea of serving compressed “builds” (from “production” mode) with the flexibility of individual class loading (from “development” mode). Ultimately, we are trying to shorten the time it takes for an application to load, because perceived “speed” comes down to the user never having to wait for the UI.

Proposed Solution: Reduce JS File on Initial Load

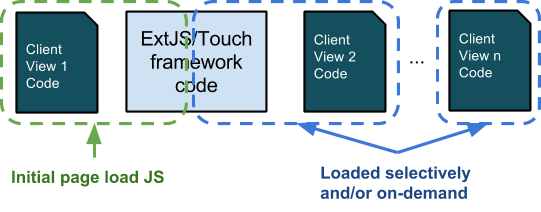

Consider the diagram above. In a large enterprise application, only a fraction of the total code base is displayed or used on the initial screen. For the best initial page load performance, it would be ideal to deliver only the code needed for that screen. As long as the application loads quickly and users are happy, we can then choose how and when to request other parts of the code base for subsequent functionality.

Our approach focused solely on improving the initial load time of one customer application. It is important to note that it was tailored to that situation and may not fit every scenario. We did not tackle selective CSS deployment, lazy initialization of controllers, or asynchronous loading mechanisms.

Before we dive into the details of our approach, let’s reiterate where this technique is appropriate:

- Achieving faster load times for the initial “front page” view:
  - Has the biggest impact on low-powered mobile devices (e.g. Android 2.x).
  - Improves both initial and subsequent “front page” load times; both benefit from parsing less JS code, and subsequent loads typically come from the browser cache (if caching is set up properly).

- Serving up JS code selectively:
  - Supports security-focused policies of not serving code to users who don’t have permission to run it anyway.
  - Appropriate for very large (3 MB+) applications where only a small portion of the code base is used in a given user session.
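For the security-focused case, the server decides which compiled files a given user may fetch. The following is a minimal sketch under assumed names: the roles, the chunk file names, and the allowedChunks/canServe helpers are all hypothetical, and a real deployment would enforce the same rule in whatever server stack serves the build.

```javascript
// Hypothetical policy: which compiled chunks each role may download.
// A Map avoids surprises with inherited object keys such as "constructor".
const CHUNKS_BY_ROLE = new Map([
  ["clerk", ["app.js"]],
  ["manager", ["app.js", "reports.js"]],
  ["admin", ["app.js", "reports.js", "admin.js"]],
]);

// Unknown roles (e.g. a user who has not signed in yet) get only the shared entry chunk.
function allowedChunks(role) {
  return CHUNKS_BY_ROLE.get(role) ?? ["app.js"];
}

// Gate to call before serving a chunk; a server would answer 403 when this is false.
function canServe(role, file) {
  return allowedChunks(role).includes(file);
}

console.log(canServe("clerk", "admin.js")); // false
console.log(canServe("admin", "admin.js")); // true
```

With a gate like this, a “low level” user never receives the code for screens they cannot open, rather than merely being prevented from running it.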

Sample App Setup

Using Sencha Cmd, you can run a basic “sencha generate app” command for Sencha Touch 2.3.1, which produces a simple project with a two-tab tab panel. Let’s call it “ChunkCompile”. For this example, we will modify the second tab so that it contains a List and a custom component whose definitions are downloaded only after the user navigates to the second tab.

In app/view/Main.js we can see the items array contains the following:

```javascript
items: [{
    xtype      : 'container',
    title      : 'Welcome',
    iconCls    : 'home',
    scrollable : true,
    html       : 'Switch to next card for dynamic JS load…',
    items: {
        docked : 'top',
        xtype  : 'titlebar',
        title  : 'Welcome to Sencha Touch 2'
    }
},{
    xtype   : 'container',
    title   : 'NEXT CARD',
    itemId  : 'nextCard',
    iconCls : 'action',
    layout  : 'fit',
    items: [{
        docked : 'top',
        xtype  : 'titlebar',
        title  : '"Next Card"'
    }]
}]
```

Note that the second item is merely a Container with a TitleBar. This functions as a placeholder for “Page 2” in the following sequence:

- Intercept “Tab Change” event for the second tab.

- Download JavaScript content needed for Page 2.

- Add xtype “page2” into the second tab (we’ll define this view in a moment).

Loading JS & Views Dynamically

Pretend for a moment that we already have a “page2.js” file ready to go, compressed and containing only the code related to the “page2” widget. How would we load it dynamically and display the associated view?

Fortunately, we have the Ext.Loader.loadScriptFile() method. We will use this utility in app/controller/Main.js:

```javascript
Ext.define('ChunkCompile.controller.Main', {

    // …

    onMainActiveCardChange: function(mainPanel, newItem, oldItem) {
        // Only the second tab ("nextCard") hosts Page 2: skip other tabs,
        // and skip if Page 2 has already been added
        if (newItem.getItemId() !== 'nextCard' || newItem.down('page2')) {
            return;
        }

        // If this is a built app, we need to load the page2 JS dynamically
        if (ChunkCompile.isBuilt && !ChunkCompile.view.Page2) {
            // Synchronously load the JS (last argument: synchronous = true)
            Ext.Loader.loadScriptFile(
                'page2.js',
                function() {
                    console.log('SUCCESS');
                },
                function() {
                    console.log('FAIL');
                },
                this,
                true
            );
        }

        // Show the "Page 2" view
        newItem.add({ xtype: 'page2' });
    }
});
```

What’s happening here can be boiled down to the following sequence:

- We intercept the “activeitemchange” event of the “main” view as the user changes tabs.

- We use a conditional statement to determine whether we need to load the “Page 2” JS dynamically:
  - “ChunkCompile.isBuilt” is a flag we inject during the build process (discussed later); we do not use the custom “dynamic JS load” pattern in development mode because there are no compiled files to work with yet.
  - “ChunkCompile.view.Page2” checks for the presence of the Page2 view definition, which exists only once its JS has been loaded.
  - Note that this logic is arbitrary; the example presented is a very basic way of checking when it’s time to load the Page2 JS.

- If all checks pass, we call Ext.Loader.loadScriptFile(‘page2.js’, …); note the “true” parameter at the end, which makes the load synchronous so that the Page2 class is defined before the next line adds it.

- Finally, we add the view to the second tab using its xtype “page2”.
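The synchronous flag keeps the example simple, but a synchronous request blocks the UI while page2.js downloads. One possible asynchronous alternative, sketched here under our own assumptions, is a loader that remembers in-flight requests so each chunk is fetched at most once. createChunkLoader is a hypothetical helper, not part of Sencha Touch; the commented browser wiring assumes the Ext.Loader.loadScriptFile(url, onLoad, onError, scope, synchronous) argument order used in the controller above, with synchronous set to false.

```javascript
// Hypothetical helper: wraps a promise-returning script loader so that each URL
// is requested at most once, even if several tab changes happen mid-download.
function createChunkLoader(loadFn) {
  const inFlight = new Map(); // url -> promise shared by every caller

  return function loadChunk(url) {
    if (!inFlight.has(url)) {
      // The executor runs immediately, so loadFn starts right away; a synchronous
      // throw inside loadFn becomes a rejection instead of escaping.
      const promise = new Promise((resolve) => resolve(loadFn(url))).catch((err) => {
        inFlight.delete(url); // forget failures so a later attempt can retry
        throw err;
      });
      inFlight.set(url, promise);
    }
    return inFlight.get(url);
  };
}

// Browser wiring (assumption, see lead-in):
// const loadChunk = createChunkLoader((url) => new Promise((resolve, reject) =>
//   Ext.Loader.loadScriptFile(url, resolve, reject, null, false)));
// loadChunk('page2.js').then(() => {
//   if (!newItem.down('page2')) newItem.add({ xtype: 'page2' });
// });
```

The trade-off is that the view can only be added inside the promise callback, so the tab shows just its TitleBar until the chunk arrives.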

Views

Now that we understand how we plan to load our packages dynamically, let’s have a quick look at the Page2 and CustomComponent classes to see how they’re defined. For this example, we deliberately made these classes simple, yet we also wanted to demonstrate this technique using multiple files.

```javascript
// @tag Page2
Ext.define('ChunkCompile.view.Page2', {
    extend   : 'Ext.Container',
    xtype    : 'page2',
    requires : [
        'Ext.layout.VBox',
        'Ext.List',
        'ChunkCompile.view.CustomComponent'
    ],
    config: {
        layout : 'vbox',
        items  : [{
            xtype : 'customcomponent'
        },{
            xtype : 'list'
        }]
    }
});
```

```javascript
// @tag Page2
Ext.define('ChunkCompile.view.CustomComponent', {
    extend : 'Ext.Component',
    xtype  : 'customcomponent',
    config : {
        html : 'Hello! This view was loaded dynamically!'
    }
});
```

Clearly, there’s nothing special about these classes, but note the “// @tag Page2” comment at the top of both files (the Main view has no such comment). We will rely on these tags shortly when running the build.
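Conceptually, a tag is just a directive inside a comment that a build tool can search for. The sketch below illustrates that idea on in-memory source strings. It is our own simplified approximation, not how Sencha Cmd parses files. The readTags/filesWithTag names and the file contents are hypothetical, and accepting comma-separated tags on one line is an assumption made for generality.

```javascript
// Simplified approximation of tag detection (not Sencha Cmd's parser):
// collect every "// @tag" directive, allowing comma-separated tag lists.
function readTags(source) {
  const tags = new Set();
  for (const match of source.matchAll(/^\s*\/\/\s*@tag\s+(.+)$/gm)) {
    for (const tag of match[1].split(",")) {
      const name = tag.trim();
      if (name) tags.add(name);
    }
  }
  return tags;
}

// Hypothetical file contents mirroring the sample app.
const files = {
  "app/view/Main.js": "Ext.define('ChunkCompile.view.Main', {});",
  "app/view/Page2.js": "// @tag Page2\nExt.define('ChunkCompile.view.Page2', {});",
  "app/view/CustomComponent.js":
    "// @tag Page2\nExt.define('ChunkCompile.view.CustomComponent', {});",
};

// Files whose source carries the given tag.
function filesWithTag(sources, tag) {
  return Object.keys(sources).filter((path) => readTags(sources[path]).has(tag));
}

console.log(filesWithTag(files, "Page2").join(", "));
// app/view/Page2.js, app/view/CustomComponent.js
```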

Building page2.js vs. all-the-rest.js

One of the best-kept secrets of Sencha Cmd is the concatenation functionality of the “sencha compile” command, which is explained in depth in the Multi-Page and Mixed Apps guide. In a nutshell, a flexible querying mechanism lets you select different combinations of code and save each combination into a separate JavaScript file. In the following example, we use source code comment (tag) searching and class namespace filtering.

The following command can be executed from our app’s directory:

```shell
sencha compile \
    union --recursive --file=app.js and \
    save allcode and \
    exclude --all and \
    include --tag Page2 and \
    include --namespace Ext.dataview and \
    save page2 and \
    concat -y build/chunked/page2.js and \
    restore allcode and \
    exclude --set page2 and \
    concat -y build/chunked/rest-of-app-and-touch.js
```

Running this command produces two files:

- build/chunked/page2.js

- build/chunked/rest-of-app-and-touch.js

This is exactly what we need for our approach to dynamic loading. Next, let’s explore how to integrate this “sencha compile” call into our existing build process.

If you are curious to learn more about the options used by sencha compile, be sure to check out the Sencha Compiler Reference and Multi-Page and Mixed Apps guides in the Ext JS documentation.
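To see what the save/restore and include/exclude steps of the command above compute, the following sketch models them as plain array and set operations over a hand-written class list. It illustrates the idea only and is not Sencha Cmd’s implementation: the real compiler derives classes, tags, and dependencies from your source and orders the output itself. The splitBuild name and the sample data are hypothetical. The sketch also adds one sanity check of our own: no class left in the initial file may require a class that was moved into page2.js.

```javascript
// Illustrative model of the compile pipeline's set operations (not Sencha Cmd itself).
function splitBuild(allcode, { tag, namespace }) {
  // exclude --all, include --tag <tag>, include --namespace <namespace>
  const isPage2 = (cls) => cls.tags.includes(tag) || cls.name.startsWith(namespace + ".");

  // save page2 / restore allcode + exclude --set page2
  const page2 = allcode.filter(isPage2).map((cls) => cls.name);
  const rest = allcode.filter((cls) => !isPage2(cls)).map((cls) => cls.name);

  // Our own sanity check: the initial file must not depend on anything moved to page2.
  const page2Set = new Set(page2);
  const brokenDeps = allcode
    .filter((cls) => !isPage2(cls))
    .flatMap((cls) =>
      cls.requires.filter((dep) => page2Set.has(dep)).map((dep) => `${cls.name} -> ${dep}`)
    );

  return { page2, rest, brokenDeps };
}

// Hypothetical, simplified class list (input order assumed to be dependency-sorted).
const allcode = [
  { name: "Ext.Container", tags: [], requires: [] },
  { name: "Ext.dataview.List", tags: [], requires: [] },
  { name: "ChunkCompile.view.Main", tags: [], requires: [] },
  { name: "ChunkCompile.view.CustomComponent", tags: ["Page2"], requires: [] },
  {
    name: "ChunkCompile.view.Page2",
    tags: ["Page2"],
    requires: ["Ext.Container", "Ext.dataview.List", "ChunkCompile.view.CustomComponent"],
  },
];

const { page2, rest, brokenDeps } = splitBuild(allcode, { tag: "Page2", namespace: "Ext.dataview" });
console.log("page2.js:", page2.join(", "));
console.log("initial file:", rest.join(", "));
console.log("broken dependencies:", brokenDeps.length); // 0
```

Page2 may depend on classes that stay in the initial file (such as Ext.Container), because that file is always loaded first; a non-empty brokenDeps list would flag the reverse, which the split cannot satisfy.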

Integrating Into Build Process

Note that we ran “sencha compile” directly instead of the usual “sencha app build”, so some of the normal build steps (e.g. CSS generation) were not executed. Ultimately, we want this to happen automatically as part of the normal build, so we need to inject our custom “sencha compile” options whenever we run “sencha app build”. How can we accomplish that?

Take a look at the “-compile-js” Ant target in .sencha/app/js-impl.xml. Note the high-level structure:

```xml
<target name="-compile-js" depends="-detect-app-build-properties">
    <if>
        <x-is-true value="${enable.split.mode}"/>
        <then>
            <x-compile refid="${compiler.ref.id}">
                ...
            </x-compile>
        </then>
        <else>
            HERE
        </else>
    </if>
</target>
```

The “sencha compile” command is called where I highlighted HERE, and I have adapted our earlier command-line parameters to the format Ant expects:

```xml
<x-compile refid="${compiler.ref.id}">
    <![CDATA[
        restore page
        and
        ${build.optimize}
        and
        save allcode
        and
        exclude -all
        and
        include -tag=Page2
        and
        include --namespace=Ext.dataview
        and
        concat -y -out=${build.dir}/page2.js ${build.concat.options}
        and
        restore allcode
        and
        exclude -tag=Page2
        and
        exclude --namespace=Ext.dataview
        and
        concat ${build.compression} -out=${build.classes.file} ${build.concat.options}
    ]]>
</x-compile>

<echo file="${build.classes.file}" append="true">
ChunkCompile.isBuilt = true;
</echo>
```

Note that we append “ChunkCompile.isBuilt = true;” to the very end of the main app.js file. Recall that our controller tests this value so that dynamic JS loading runs only in built (“production”) mode.

Resulting File Sizes

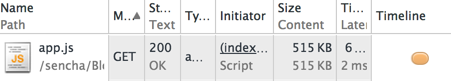

Before we edited js-impl.xml, running “sencha app build package” on our sample application produced a single 515 KB JS file. It contained only the necessary parts of the framework plus our application code.

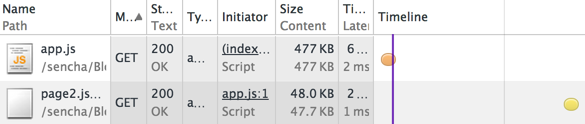

After implementing the dynamic loading technique, the initial app.js download drops to 477 KB, a saving of 38 KB (roughly 7%). The subsequent load of page2.js (when the user navigates to the second tab) is a mere 48 KB and completes almost instantly.
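These figures are easy to double-check. The short calculation below uses only the three sizes reported in the screenshots above; it computes the saving on the initial download and the combined size once both files have been fetched.

```javascript
// Sizes reported in the screenshots (KB).
const singleBuildKB = 515;  // one app.js before splitting
const initialChunkKB = 477; // app.js after splitting
const page2ChunkKB = 48;    // page2.js, fetched on demand

const initialSavingKB = singleBuildKB - initialChunkKB;           // 38
const initialSavingPct = (initialSavingKB / singleBuildKB) * 100; // about 7.4
const bothChunksKB = initialChunkKB + page2ChunkKB;               // 525

console.log(`Initial load saves ${initialSavingKB} KB (${initialSavingPct.toFixed(1)}%)`);
console.log(`app.js plus page2.js: ${bothChunksKB} KB`);
```

In other words, splitting trades a smaller first download for a slightly larger total (10 KB more here) for users who do open the second tab.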

While the code in our sample application is trivial, the load-time savings add up very quickly. I was recently involved in building a large financial app with over 3 MB of application code running on a low-powered mobile device. Using the technique presented here, we reduced the original five-second load time to just under one second. Better still, the technique scales with the app: as it grows, new feature areas can be split into their own on-demand files.

Conclusion

As more and more large HTML applications are built using the Single Page App paradigm, it’s clear that time-tested best practices for website development fall short for large enterprise web applications. The amount of JavaScript required to build these applications has grown dramatically in recent years, and unfortunately the tooling to manage it has lagged behind. Sencha Cmd helps address this problem and offers many more customizable features for controlling how your code is compressed and segmented.

While this approach may not solve every problem you face when building a large enterprise application, we hope it helps in some scenarios. If you have dealt with similar issues in your applications, please tell us about your experiences in the comments below. We would love to hear what solutions you have created.

If you would like to explore this approach or others for your particular situation, please contact Sencha Professional Services for a consultation.
