5 Myths About Mobile Web Performance

Recently we’ve heard some myths being repeated about mobile HTML performance that are not all that accurate. Like good urban myths, they sound compelling and plausible. But these myths are based on incorrect premises, misconceptions about the relationship between native and web software stacks and a scattershot of skewed data points. We thought it was important to address these myths with data that we’ve collected over the years about performance, and our own experiences doing optimizations of mobile web app performance.


### TL;DR

#### Myth #1: Mobile web performance is mostly driven by JavaScript performance on the CPU

Reality: Most web performance is constrained by the optimization of rendering pipelines, speed of DOM interactions and degree of GPU acceleration. Faster JavaScript is always helpful, but it’s rarely critical.

#### Myth #2: CPU-Bound JavaScript has only become faster because of hardware improvements (aka Moore’s Law)

Reality: 50% of mobile JavaScript performance gains over the last four years have come from software improvements, not hardware improvements. Even single-threaded JavaScript performance is still improving, and that is before considering that most app devs are using Web Workers to take advantage of multi-threading where possible.

#### Myth #3: Mobile browsers are already fully optimized, and have not improved much recently

Reality: Every mobile browser has a feature area where it outperforms other browsers by a factor of 10–40x. The Surface outperforms the iPhone on SVG by 30x. The iPhone outperforms the Surface on DOM interaction by 10x. There is **significant** room to further improve purely from matching current best competitive performance.

#### Myth #4: Future hardware improvements are unlikely to translate into performance gains for web apps

Reality: Every hardware generation in the last three years has delivered significant JavaScript performance gains. Single thread performance on mobile will continue to improve, and browser makers will advance the software platform to take advantage of improved GPU speeds, faster memory buses and multi-core through offloading and multi-threading. Many browsers already take advantage of parallelism to offload the main UI thread. For example, Firefox offloads compositing, Chrome offloads some HTML parsing, and IE offloads JavaScript JIT compilation.

#### Myth #5: JavaScript garbage collection is a performance killer for mobile apps

Reality: This is trueish but a little outdated. Chrome has had an incremental garbage collector since Chrome 17 in 2011. Firefox has had it since last year. This reduced GC pauses from 200ms down to 10ms: a dropped frame rather than a noticeable pause. Avoiding garbage collection events can deliver significant improvements to performance, but it’s usually only a killer if you’re using desktop web dev patterns in the first place or on old browsers. One of the core techniques in Fastbook, our mobile HTML5 Facebook clone, is to recycle a pool of DOM nodes to avoid the overhead of creating new ones (and the associated overhead of garbage collecting old ones). It’s very possible to write a bad garbage collector (cf. old Internet Explorers) but there’s nothing intrinsically limiting about a garbage collected language like JavaScript (or Java).

### Ok: The Essentials

First, let’s remind ourselves of the basics. From 50,000 feet, the browser is a rich and complex abstraction layer that runs on top of an operating system. A combination of markup, JavaScript and style sheets uses that abstraction layer to create application experiences. This abstraction layer charges a performance tax, and the tax varies depending on which parts of the abstraction layer you’re using. Some parts of the abstraction layer are faster because they match the underlying OS calls or system libraries closely (e.g. Canvas2D on Mac OS). Some parts of the abstraction layer are slower because they don’t have any close analog in the underlying OS, and they’re inherently complicated (DOM tree manipulation, prototype chain walking).


Very few mobile apps are compute intensive: no-one is trying to do DNA sequencing on an iPhone. Most apps have a very reasonable response model. The user does something, then the app responds visually with immediacy at 30 frames per second or more, and completes a task in a few hundred milliseconds. As long as an app meets this user goal, it doesn’t matter how big an abstraction layer it has to go through to get to silicon. This is one of the red herrings we’d like to cook and eat.

At Sencha, we know that a good developer can create app experiences that are more than fast enough to meet user expectations using mobile web technologies and a modern framework like Sencha Touch. And we’ve been encouraged by the performance trends on mobile over the last 3 years. We’d like to share that data with you in the rest of the post.

It’s not our intention to claim that mobile web apps are **always** as fast as native, or that they are always comparable in performance to desktop web apps. That wouldn’t be true. Mobile hardware is between 5x and 10x slower than desktop hardware: the CPUs are less powerful, the cache hierarchy is flatter and the network connection has higher latency. And any layer of software abstraction (like the browser) can charge a tax. This is not just a problem for web developers. Native iOS developers can tell you that iOS CoreGraphics was too slow on the first Retina iPad, forcing many of them to write directly to OpenGL.

### Digging Deeper Into the Myths

Having worked for three years on optimization in Sencha Touch for data driven applications, we can say with confidence that we’ve rarely been stymied by raw JavaScript performance. The only significant case so far has been the Sencha Touch layout system, which we switched over to Flexbox after finding JavaScript to be too slow on Android 2.x.

Far more often, we’ve run into problems with DOM interactions, the browser rendering engine and event spam. All of these are limitations created by the architects and developers of each browser, and have nothing to do with the inherent characteristics of JavaScript or JavaScript engines. As one example, when we’ve worked with browser makers on performance optimization, we’ve seen a 40x improvement in one of the browser operations (color property changes) that bottleneck our scrolling list implementation.
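To make "event spam" concrete: touch and scroll events can fire far more often than the screen repaints, so a common mitigation is to throttle the handler. The sketch below is a generic illustration, not Sencha Touch's actual implementation. The `throttle` helper and its injectable `now` clock are hypothetical names introduced here.

```javascript
// Hypothetical helper: run `handler` at most once per `intervalMs`.
// `now` is injectable so the sketch can be exercised without a real clock.
function throttle(handler, intervalMs, now = Date.now) {
  let lastRun = -Infinity;
  return function throttled(...args) {
    const t = now();
    if (t - lastRun < intervalMs) {
      return false; // dropped: too soon after the previous accepted call
    }
    lastRun = t;
    handler.apply(this, args);
    return true; // handled
  };
}
```

In a browser this might be wired up as `element.addEventListener("touchmove", throttle(onMove, 16))`, where `onMove` is your own handler and 16ms is roughly one frame at 60fps.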

### JavaScript Performance on iOS & Android

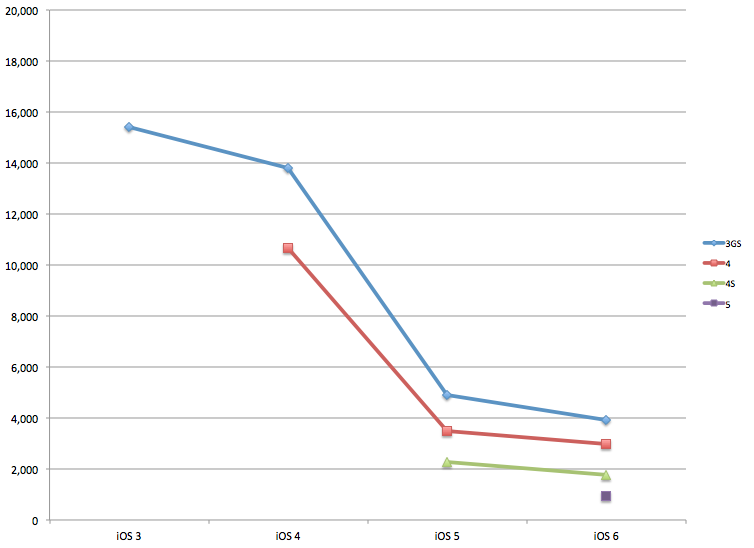

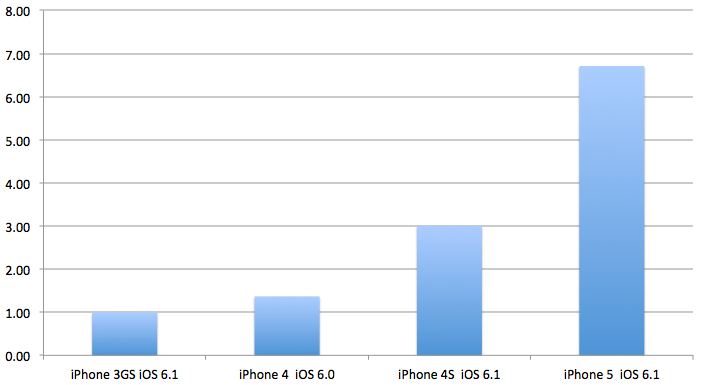

Although we’ve said JavaScript performance isn’t that important on mobile, we want to demolish the myth that it’s not improving. Below is a graph showing a four year history of SunSpider scores on iOS (lower is better) by model and by iOS version. (Luckily, SunSpider is a very widely used test, so there are records all over the web of testing performed on older iOS versions.) In 2009, the original iPhone 3GS running iOS3 had a score of over 15,000 — quite a poor performance, and about a 30x performance gap vs. the 300–600ish score of desktop browsers in 2009.

However, if you upgraded that iPhone 3GS to iOS4, 5 and 6, you’d have experienced a 4x improvement in JavaScript performance on the exact same hardware. (The big jump in performance between iOS4 and iOS5 is the Nitro engine.) The gains in SunSpider continue in iOS7, but we’re still under NDA about those. Compared with today’s desktop browsers, leading-edge mobile browsers are now about 5x slower, a big relative gain vs. 2009’s 30x gap.

For even more detail on hardware and software advances in iOS hardware, see Anandtech’s review from last October.

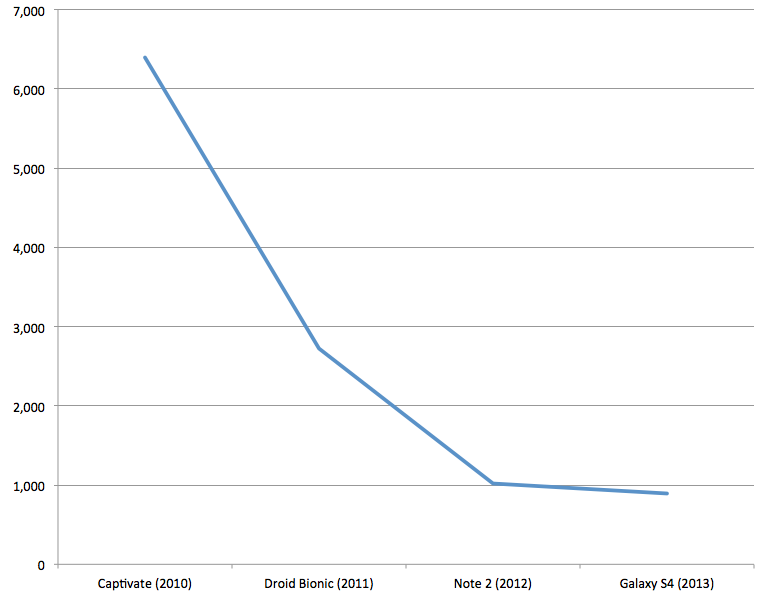

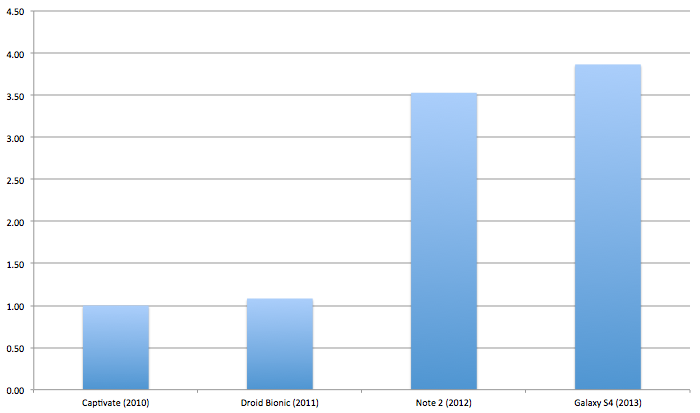

There have been similar levels of improvement in the Android platform. From our testing lab, we assembled a collection of Android platforms from the last three years that we believe represent typical high end performance from their time. The four phones we tested were:

* Samsung Captivate Android 2.2 (Released July 2010)

* Droid Bionic Android 2.3.4 (Released September 2011)

* Samsung Galaxy Note 2 Android 4.1.2 (Released September 2012)

* Samsung Galaxy S4 Android 4.2.2 (Released April 2013)

As you can see below, there has also been a dramatic improvement in SunSpider scores over the last four years. The performance jump from Android 2.x to Android 4.x is a 3x improvement.

In both cases, the improvement is better than what we’d expect from Moore’s Law alone. Over 3 years, we’d expect a 4x improvement (2x every 18 months), so software is definitely contributing to the improvement in performance.
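The arithmetic behind that expectation is simple enough to write down. This is a rough sketch: `expectedSpeedup` and `speedupFromScores` are hypothetical helper names, and the desktop score of 500 is an illustrative value picked from the 300–600 range quoted earlier, not a measurement.

```javascript
// Expected speedup if performance doubles every `doublingMonths` months.
function expectedSpeedup(months, doublingMonths = 18) {
  return Math.pow(2, months / doublingMonths);
}

// Ratio between two SunSpider-style scores, where lower is better.
function speedupFromScores(slowerScore, fasterScore) {
  return slowerScore / fasterScore;
}

console.log(expectedSpeedup(36)); // 4 (two doublings in three years)
console.log(speedupFromScores(15000, 500)); // 30 (illustrative 2009 gap)
```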

### Testing What Matters

As we previously mentioned, SunSpider has become a less interesting benchmark because it only has a faint connection with the performance of most applications. In contrast, DOM interaction benchmarks as well as Canvas and SVG benchmarks can tell us a lot more about user experience. (Ideally, we would also look at measurements of CSS property changes as well as the frames per second of CSS animations, transitions and transforms, because these are frequently used in web apps, but there are still no hooks to measure all of these correctly on mobile.)

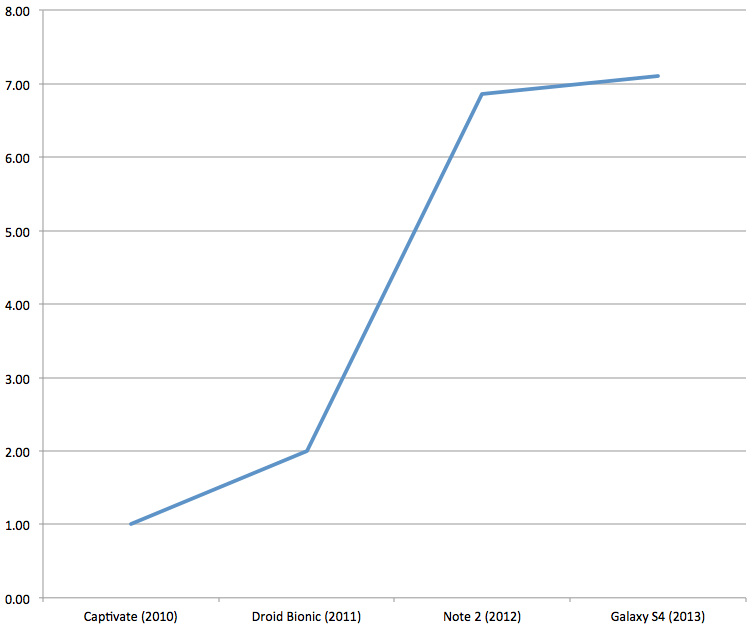

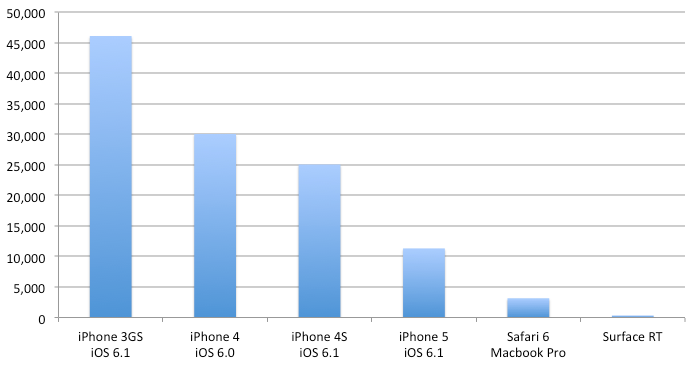

First, DOM interaction tests: we used the Dromaeo Core DOM tests as our benchmark. Below are the results from testing on our 4 Android models. We indexed all Core DOM results (Attributes, Modifications, Query, Traversal) to the Captivate’s performance, and then took the average of the 4 Core DOM indices.
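The indexing step can be sketched as follows, assuming higher-is-better scores for each category. The `averageIndex` helper is a hypothetical name and the numbers are made up for illustration. They are not our measurements.

```javascript
// Index each test category to a baseline device, then average the indices.
// Assumes "higher is better" scores (for example, runs per second).
function averageIndex(deviceScores, baselineScores) {
  const categories = Object.keys(baselineScores);
  const total = categories.reduce(
    (sum, name) => sum + deviceScores[name] / baselineScores[name],
    0
  );
  return total / categories.length;
}

// Made-up numbers for illustration only.
const baseline = { attributes: 100, modifications: 50, query: 200, traversal: 80 };
const newer = { attributes: 300, modifications: 200, query: 400, traversal: 240 };

console.log(averageIndex(newer, baseline)); // 3, i.e. (3 + 4 + 2 + 3) / 4
```

Averaging indices rather than raw scores keeps one category with large absolute numbers from dominating the result.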

As can be seen, there was a 3.5x performance improvement from Android 2.x to 4.x, although the S4 was only a minor improvement on the Note 2. We can also look at Dromaeo results on iOS. Sadly, we can’t do a comparison of performance across older versions of iOS, but we can show the steady improvement across multiple generations of iPhone hardware. Interestingly, the performance improvement even within iOS6 across these device generations is better than the increase in CPU speed, which means that improvements in memory bandwidth or caching must be responsible for the better than Moore’s Law performance increase.

In order to show that there is still a lot of potential for browsers to match each other’s performance, we also benchmarked the Surface RT. The poor performance of DOM interactions in IE has always been a source of frustration, and it’s worth pointing out once again the large gap in DOM interaction performance between the iPhone and the Microsoft Surface RT running IE10. One of the myths we’d like to destroy is that the mobile software stack is as good as it gets. This is not true for Windows RT: a 10x performance gap is waiting to be filled. (We’ll show where iOS falls down in a later benchmark.)

### Graphics Benchmarks

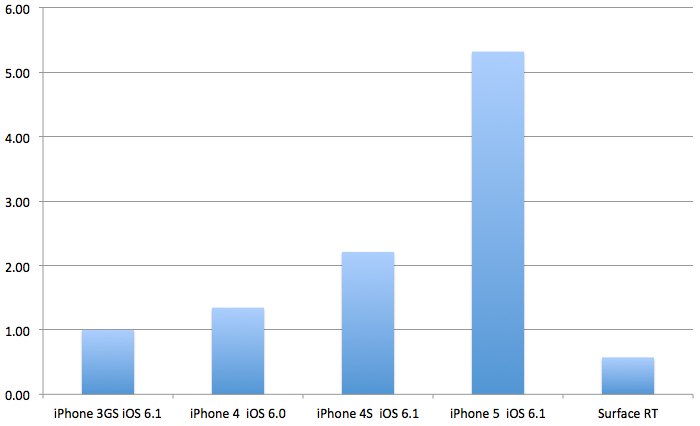

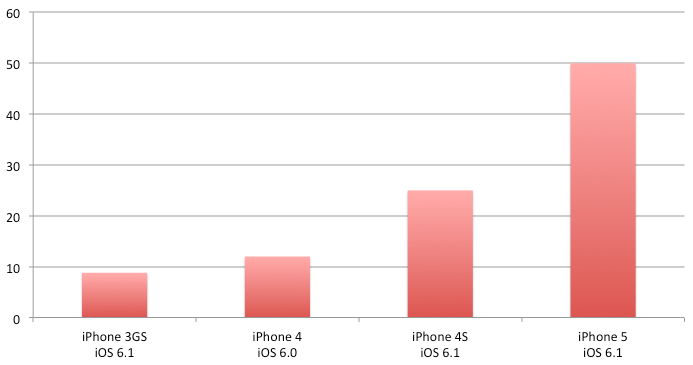

In addition to significant improvements in JavaScript and DOM interaction, we also wanted to show improvements in Canvas and SVG Performance. In iOS5, we previously found that Canvas2D performance increased by 5–8x on the same hardware vs. iOS4. [Some micro-benchmarks ran 80x faster on the iPad 2 when upgraded to iOS5](/blog/apple-ios-5-html5-developer-scorecard/). And because Canvas is closely bound to CoreGraphics on the iPhone, when native graphics gets faster, so does Canvas. In this round of testing, we used the mindcat Canvas2D benchmark to measure performance. Here, we see a substantial increase in Canvas performance on multiple generations of iPhone hardware — all running the same generation of iOS.

And remember, this is on top of a big performance gain from iOS4 to iOS5. As can be seen, the improvement in Canvas2D performance (7x) is better than the CPU improvement over the same time period (4x) and reflects the improved GPU and GPU software stack on the iPhone over time. GPU improvements and the increased use of GPU acceleration need to be factored in to any account of mobile web performance gains.

When we look at the same tests on Android, we see an interesting data point — a lack of correlation between CPU performance and Canvas. The big change from Android 2 is that Android 2 had no GPU acceleration of Canvas at all. Again, a purely software change — GPU acceleration — is the big driver of performance improvement.

### SVG Benchmarks

SVG is another area that shows the broader story about web performance. Although not as popular as Canvas (largely because Canvas has become so fast), SVG has also shown solid performance improvements as hardware has improved. Below is a test from Stephen Bannasch that tests the time taken to draw a 10,000 segment SVG path. Once again, the test shows constant steady improvement across hardware generations as the CPU and GPUs improve (since these are all on iOS6).

The main gap in performance is in software: the Surface RT is more than **30x faster** than the iPhone 5 (or the iPad 4, which we also tested but haven’t shown). In fact, the Surface RT is even 10x faster than Desktop Safari 6 running on my 1 year old MacBook! This is the difference that GPU acceleration makes. Windows 8/IE10 fully offloads SVG to the GPU, and the result is a huge impact. As browser makers continue to offload more graphics operations to the GPU, we can expect similar step-function changes in performance for iOS and Android.

In addition to long path draws, we also ran another SVG test from Cameron Adams that measures the frames per second of 500 bouncing balls. Again, we see constant performance improvement over the last four generations of hardware.

What’s more interesting than the improvement is the absolute fps. Once animations exceed 30 frames per second, you’ve exceeded the fps of analog cinema (24 fps) and met viewer expectations for performance. At 60 fps, you’re at the buttery smoothness of GPU accelerated quality.
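For readers who want to check these thresholds in their own apps, here is a minimal sketch of the arithmetic. It assumes you have already collected frame timestamps in milliseconds. `averageFps` and `frameBudgetMs` are hypothetical helper names, not part of any benchmark used above.

```javascript
// Average frames per second from a list of frame timestamps (milliseconds).
function averageFps(timestamps) {
  if (timestamps.length < 2) return 0;
  const elapsedMs = timestamps[timestamps.length - 1] - timestamps[0];
  if (elapsedMs <= 0) return 0;
  // N timestamps delimit N - 1 frame intervals.
  return ((timestamps.length - 1) * 1000) / elapsedMs;
}

// Time available to produce each frame at a target frame rate.
function frameBudgetMs(targetFps) {
  return 1000 / targetFps;
}
```

In a browser that supports it, the timestamps could come from successive `requestAnimationFrame` callbacks. At 30fps the budget is about 33ms per frame, and at 60fps it is about 16.7ms.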

### Real World Performance: Garbage Collection, Dynamic Languages and More

We hope that the preceding tour through mobile performance has demonstrated a few things, and demolished a few myths. We hope we’ve shown that:

* JavaScript performance continues to increase rapidly

* This performance increase is driven by both hardware and software improvements

* Although this is a “good thing”, much of app performance has nothing to do with JavaScript CPU performance

* Luckily, these other parts that impact performance are also improving rapidly, including DOM interaction speed, Canvas and SVG.

And although we could show it with high speed camera tests, all mobile web developers know that performance of animations, transitions and property changes has vastly improved since the days of Android 2.1, and they continue to get better each release.

So having slain a few false dragons, let’s mix metaphors and tilt at some real windmills. The last claim we’ve heard going around is that mobile web apps will **always be slow** because JavaScript is a dynamic language where garbage collection kills performance. There is definitely some truth in this. One of the benefits of using a framework like Sencha Touch, where DOM content is generated dynamically, is that we manage object creation and destruction as well as events at a level above the browser in the context of a specific UI component. This enables, for example, 60fps performance for data-backed infinite content (grids, lists, carousels) by recycling DOM content, throttling events and prioritizing actions.
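The recycling idea can be illustrated with a generic object pool. This is a minimal sketch, not the Sencha Touch or Fastbook implementation: `createPool`, `acquire`, `release` and `createdCount` are hypothetical names, and the factory is passed in so the sketch does not depend on a DOM.

```javascript
// Hypothetical pool: `create` builds a new item, `reset` cleans a used one.
function createPool(create, reset) {
  const free = [];
  let created = 0;
  return {
    // Hand out a recycled item if one is available, otherwise build one.
    acquire() {
      if (free.length > 0) return free.pop();
      created += 1;
      return create();
    },
    // Clean the item and keep it for reuse instead of leaving it to the GC.
    release(item) {
      reset(item);
      free.push(item);
    },
    get createdCount() {
      return created;
    },
  };
}
```

In a browser, a list might use `createPool(() => document.createElement("div"), (el) => { el.textContent = ""; })` and release rows as they scroll out of view, so a long list only ever allocates about a screenful of nodes.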

Without this level of indirection, it’s very easy to produce poor performing mobile web app experiences — like the first generation mobile web app from Facebook. We believe that applications written on top of UI Frameworks like jQuery Mobile that are too closely tied to the underlying DOM will continue to suffer from performance problems for the foreseeable future.

### Bringing It All Together

There is a lot of data and a lot of different topics covered in this piece, so let me try to bring it all together in one place. If you’re a developer, you should take a few things away from this:

* Mobile platforms are 5x slower than desktop — with slower CPUs, more constrained memory and lower powered GPUs. That won’t change.

* Mobile JavaScript + mobile DOM access is getting progressively faster, but you should still treat the iPhone 5 as if it’s a Chrome 1.0 browser on a 2008-era desktop (i.e. 5–10x faster than desktop IE8).

* Graphics have also been getting much faster thanks to GPU acceleration and general software optimization. 30fps+ is already here for most use cases.

* Garbage collection and platform rendering constraints can still bite you, and it’s essential to use an abstracted framework like Sencha Touch to get optimal performance.

* Take advantage of the remote debugging and performance monitoring capabilities that the mobile web platforms offer: Chrome for Android now has a handy fps counter, and compositing borders can show you when content has been texturized and handed off to the GPU and how many times that texture is then loaded.

We hope that the review of performance data has been a helpful antidote to some of the myths out there. I want to thank everyone at Sencha who contributed to this piece including Ariya Hidayat for review and sourcing lots of links to browser performance optimizations, and Jacky Nguyen for detail on Sencha Touch abstractions and performance optimizations.
